Announcing KServe v0.17 - Production-Ready LLM Serving with LLMInferenceService

Published on March 13, 2026

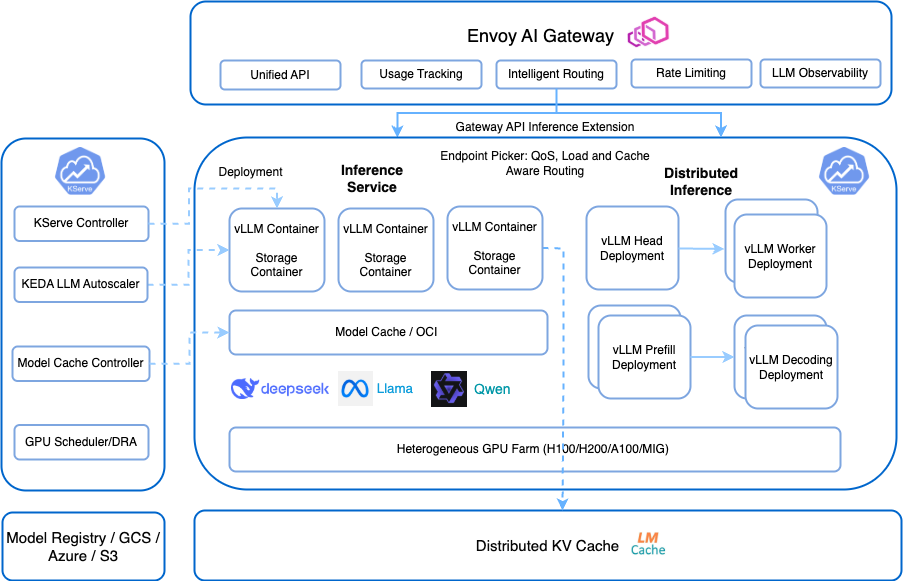

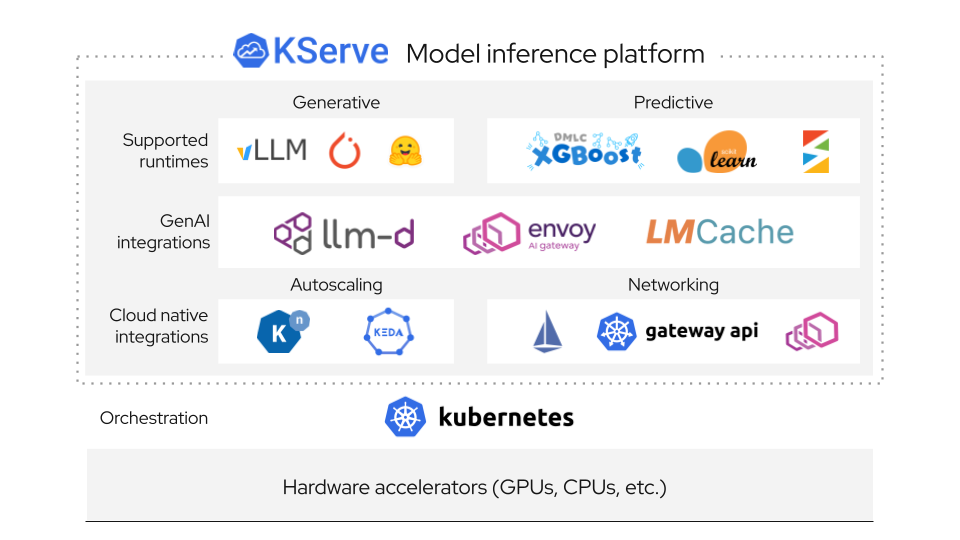

We are excited to announce KServe v0.17, a landmark release that brings LLMInferenceService to production readiness with a GenAI-first architecture built on the llm-d framework. It introduces KV-cache-aware intelligent routing, disaggregated prefill-decode serving, distributed inference with tensor, data, and expert parallelism, Envoy AI Gateway integration with token-based rate limiting, and a restructured, modular Helm chart architecture.